    $ cat /proc/sys/net/ipv4/tcp_reordering
    3

    tcp_reordering - INTEGER
        Maximal reordering of packets in a TCP stream.
        Default: 3
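The `cat` command above can also be done from a program. Below is a minimal C sketch that reads the integer stored in a sysctl file. The helper name `read_sysctl_int` is hypothetical. The value it returns on a given host depends on local configuration; 3 is only the documented default.

```c
#include <stdio.h>

/* Hypothetical helper: read one integer from a sysctl-style file such as
 * /proc/sys/net/ipv4/tcp_reordering (the same data `cat` prints).
 * Returns 0 on success, -1 if the file cannot be opened or parsed. */
int read_sysctl_int(const char *path, long *out)
{
    FILE *f = fopen(path, "r");
    if (f == NULL)
        return -1;
    int ok = (fscanf(f, "%ld", out) == 1);  /* file holds e.g. "3\n" */
    fclose(f);
    return ok ? 0 : -1;
}
```

Usage: `long v; if (read_sysctl_int("/proc/sys/net/ipv4/tcp_reordering", &v) == 0) printf("%ld\n", v);`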

`proj-rep/kernel_code/tcp_ipv4.c`, line 1921 (source: nms.csail.mit.edu/~kandula/data/tcp-mult.tgz; license: Unknown; language: C):

    tp->reordering = sysctl_tcp_reordering;

`irestarter-0.9.0/src/netfilter-script.c`, lines 271-273 (source: packetstormsecurity.nl/.../firewall/firestarter/firestarter-0.9.0.tar.gz; license: GPL; language: C):

    fprintf (script, "# Set TCP Re-Ordering value in kernel to '5'\n");
    fprintf (script, "if [ -e /proc/sys/net/ipv4/tcp_reordering ]; then\n"
                     " echo 5 > /proc/sys/net/ipv4/tcp_reordering\nfi\n\n");

`cifs-1.13/fs/cifs/file.s`, lines 64934-64936 (source: de.samba.org/samba/ftp/cifs-cvs/cifs-1.13-2.6-bad.tar.gz; license: Unknown; language: Assembly):

    .LC2778:
        .string "NET_TCP_REORDERING"
    .LC873:
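The firestarter hit generates a shell fragment. That fragment writes 5 to the sysctl only if the file exists. Below is a minimal C sketch of the same logic, assuming a Linux host. `set_sysctl_int_if_present` is a hypothetical helper, and the write normally requires root privileges. The command-line equivalent is `sysctl -w net.ipv4.tcp_reordering=5`. The tcp_ipv4.c hit copies the sysctl into `tp->reordering` per connection. This suggests that a new value affects connections set up afterwards rather than existing ones.

```c
#include <stdio.h>
#include <unistd.h>

/* Hypothetical helper mirroring firestarter's generated shell:
 *   if [ -e /proc/sys/net/ipv4/tcp_reordering ]; then echo 5 > ...; fi
 * Returns 1 if the file is absent (nothing written), 0 on success,
 * -1 on error (e.g. permission denied when not running as root). */
int set_sysctl_int_if_present(const char *path, long value)
{
    if (access(path, F_OK) != 0)
        return 1;                        /* the `[ -e ... ]` test failed */
    FILE *f = fopen(path, "w");          /* like the shell's `>` redirect */
    if (f == NULL)
        return -1;
    int written = fprintf(f, "%ld\n", value);
    int closed = fclose(f);
    return (written < 0 || closed != 0) ? -1 : 0;
}
```

Usage: `set_sysctl_int_if_present("/proc/sys/net/ipv4/tcp_reordering", 5)`.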

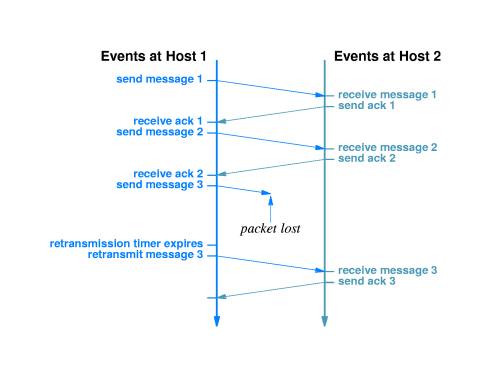

• Load splitting: To balance load across multiple paths, packets of the same stream may be sent over different routes. Because these routes have different delays, packets can arrive out of order. Problems caused by reordering are handled at different levels of the TCP/IP suite. TCP allows adjustment of the ‘dupthresh’ parameter, i.e., the number of duplicate ACKs tolerated before the next unacknowledged packet is classified as lost [3]. This parameter (also called tcp_reordering in Linux implementations) lets a limited amount of reordering occur without affecting throughput. At the application level, out-of-sequence packets are buffered until they can be played back in sequence. However, more out-of-order delivery consumes more resources and degrades end-to-end performance. Consequently, certain techniques attempt to reduce reordering at intermediate nodes, i.e., at the IP level.

A Comparative Analysis of Packet Reordering Metrics*

Nischal M. Piratla (1, 2), Anura P. Jayasumana (1), Abhijit Bare (1)

(1) Computer Networking Research Laboratory, Colorado State University, Fort Collins, CO 80523, USA

(2) Deutsche Telekom Laboratories, Ernst-Reuter-Platz 7, D-10587 Berlin, Germany
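The dupthresh mechanism from the load-splitting item can be illustrated with a simplified C model. The receiver cumulatively ACKs every arriving segment. The sender counts duplicate ACKs and, following the RFC 5681 convention, declares a loss on the dupthresh-th duplicate. The model has no real loss, no SACK, no timers and no dynamic adjustment, so every triggered retransmission is spurious. `fast_retransmits` is a hypothetical name, and this is not the Linux implementation.

```c
#include <stdbool.h>

#define MAX_SEGS 64

/* arrival[i] = sequence number (0..n-1) of the i-th segment to arrive.
 * Returns how many fast retransmits a sender with the given dupthresh
 * would trigger, or -1 on invalid input. */
int fast_retransmits(const int *arrival, int n, int dupthresh)
{
    if (n < 0 || n > MAX_SEGS)
        return -1;
    bool received[MAX_SEGS] = { false };
    int next_expected = 0;  /* receiver's cumulative ACK point */
    int last_ack = -1;      /* last cumulative ACK seen by the sender */
    int dupacks = 0;
    int retransmits = 0;

    for (int i = 0; i < n; i++) {
        if (arrival[i] < 0 || arrival[i] >= n)
            return -1;
        received[arrival[i]] = true;
        while (next_expected < n && received[next_expected])
            next_expected++;            /* advance over in-order data */
        int ack = next_expected;        /* one ACK per arriving segment */

        if (ack == last_ack) {
            dupacks++;
            if (dupacks == dupthresh)
                retransmits++;          /* segment `ack` presumed lost */
        } else {
            last_ack = ack;             /* new data acknowledged */
            dupacks = 0;
        }
    }
    return retransmits;
}
```

With the arrival order 0, 2, 3, 4, 1, 5, segment 1 is overtaken by three segments and produces three duplicate ACKs. A dupthresh of 3 (the tcp_reordering default) treats it as lost. A dupthresh of 4 or more, such as the 5 firestarter sets, absorbs this reordering.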